Work in progress

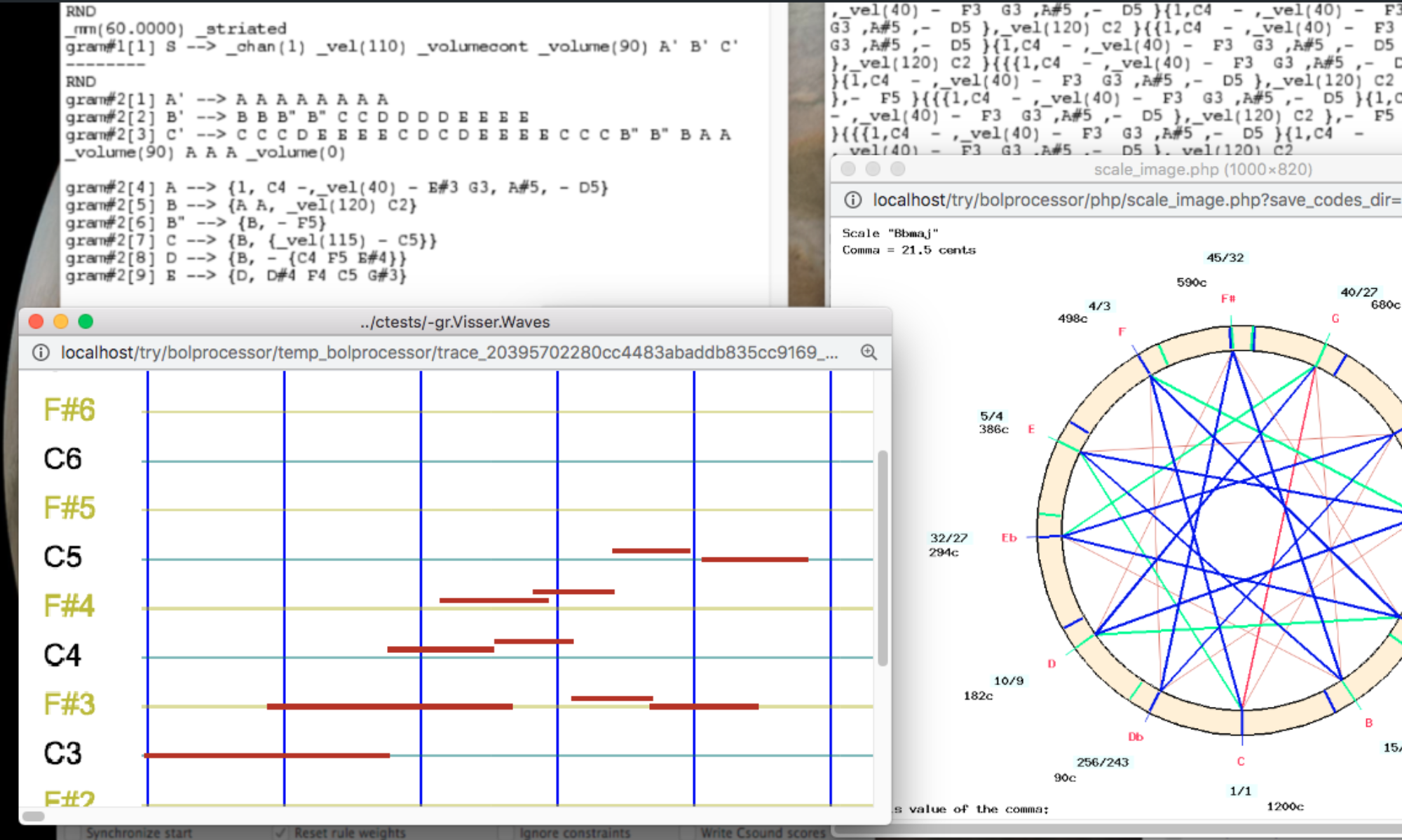

Capturing MIDI input opens the way to "learning" from the performance of a musician or another MIDI device. The first step is to use the captured incoming NoteOn/NoteOff events, and optionally ControlChange and PitchBend events, to build a polymetric structure that reproduces the stream.

The difficulty of this task lies in designing the most meaningful polymetric structure, a problem for which AI tools may prove helpful in the future. Proper time quantization is also needed to avoid overly complicated results.

We've made it possible to capture MIDI events while other events are playing. For example, the output stream of events can provide a framework for the timing of the composite performance. Consider, for instance, the tempo set by a bass player in a jazz improvisation.

The _capture() command

A single command is used to enable/disable a capture: _capture(x), where x (in the range 1…127) is an identifier of the 'source'. This parameter will be used later to handle different parts of the stream in different ways.

_capture(0) is the default setting: input events are not recorded.

The captured events and the events performed alongside them are stored in a 'capture' file in the temp_bolprocessor folder. This file is processed by the interface.

(Examples are found in the project "-da.tryCapture".)

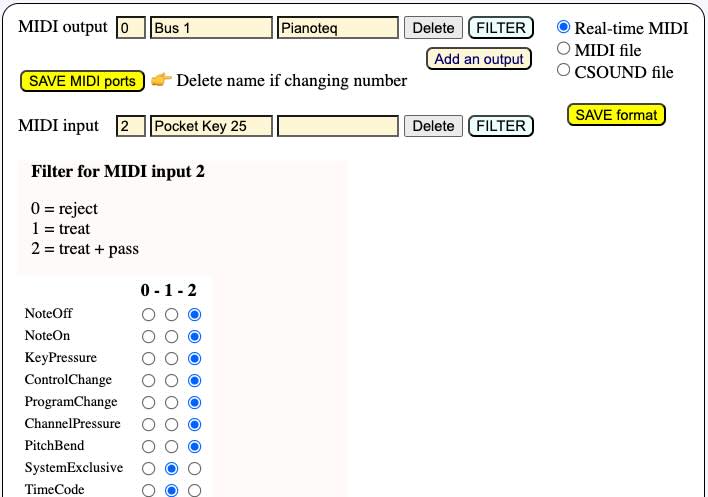

The first step in using _capture() is to set up the MIDI input, and more specifically its filter. The filter should at least accept NoteOn and NoteOff events. ControlChange and PitchBend messages can also be captured.

If the pass option is set (see picture), incoming events will also be heard on the output MIDI device. This is useful if the input device is a silent device.

It is possible to create several inputs connected to several sources of MIDI events, each one with its own filter settings. Read the Real-time MIDI page for more explanations.

Another important detail is the quantization setting. If we want to construct polymetric structures, it may be important to round the dates to the nearest multiple of a fixed duration, typically 100 milliseconds. This can be set in the settings file "-se.tryCapture".
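As an illustration of what quantization does to dates, here is a minimal Python sketch. The function name `quantize` and the sample dates are hypothetical, and the rounding rule (nearest multiple, ties rounded up) is an assumption rather than a description of the Bol Processor's internal code.

```python
def quantize(date_ms: int, step_ms: int = 100) -> int:
    """Round a date in milliseconds to the nearest multiple of step_ms."""
    if step_ms <= 0:
        raise ValueError("step_ms must be positive")
    # Integer arithmetic avoids floating-point surprises; ties round up.
    return ((date_ms + step_ms // 2) // step_ms) * step_ms


# Hypothetical raw dates, as they might arrive from a keyboard.
raw_dates = [1987, 2043, 2961, 3049]
print([quantize(d) for d in raw_dates])  # [2000, 2000, 3000, 3000]
```

With a step of 100 ms, events that were played almost together end up on the same date, which is what keeps the resulting structure simple.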

Simple example

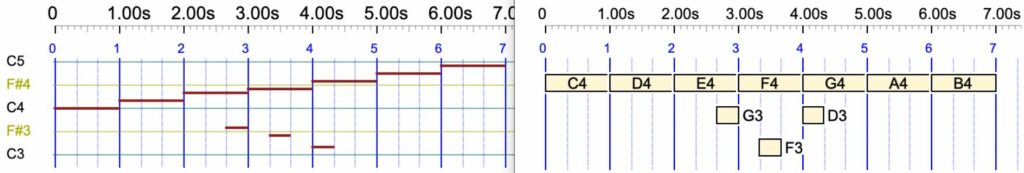

Let us take a look at a very simple example of a capture on top of a performance.

C4 D4 _capture(104) E4 F4 G4 _capture(0) A4 B4

The machine will play the sequence of notes C4 D4 E4 F4 G4 A4 B4. It will listen to the input while playing E4 F4 G4. It will record both the sequence E4 F4 G4 and the notes received from a source tagged "104".

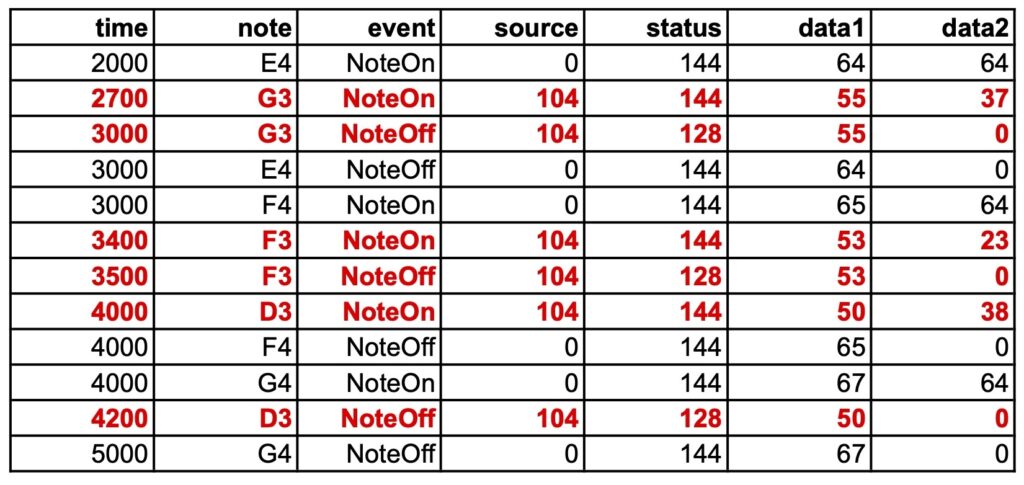

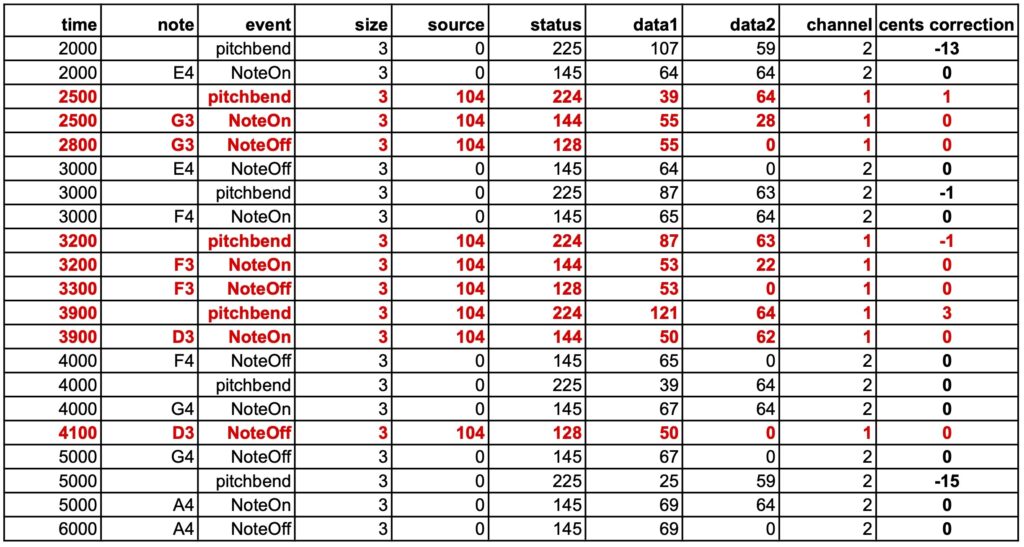

Suppose that the sequence G3 F3 D3 was played on top of E4 F4 G4. The capture file might look like this:

Note that all dates are rounded to multiples of 100 milliseconds, which is the quantization setting. For example, the NoteOff of input note G3 falls exactly on the date 3000 ms, which is the NoteOff of the played note E4.

The recording of input and played notes starts at note E4 and ends at note G4, as specified by _capture(104) and _capture(0).

An acceptable approximation of this sequence would be the polymetric expression:

C4 D4 {E4 F4 G4, - - G3 - F3 - D3 - -} A4 B4

Approximations will be automatically created from the capture files at a later stage.
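To see how the polymetric expression above maps onto dates, the following Python sketch computes the onset and end of each note in the second field. It assumes that both fields of the expression share the same total duration, that each played note lasts one second, and that E4 starts at 2000 ms. The helper `field_onsets` is hypothetical and is not part of the Bol Processor.

```python
def field_onsets(symbols, total_ms, start_ms=0):
    """Return (symbol, onset_ms, end_ms) for every sounding symbol of a field.

    All symbols share total_ms equally; '-' denotes a silence.
    """
    n = len(symbols)
    return [
        (s, start_ms + i * total_ms / n, start_ms + (i + 1) * total_ms / n)
        for i, s in enumerate(symbols)
        if s != "-"
    ]


played = "E4 F4 G4".split()
captured = "- - G3 - F3 - D3 - -".split()
total = len(played) * 1000   # assumption: one second per played note
start = 2000                 # assumption: E4 begins after C4 and D4

for name, onset, end in field_onsets(captured, total, start):
    print(name, round(onset), round(end))
```

Under these assumptions G3 ends at 3000 ms, which agrees with the NoteOff date mentioned in the text.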

Pause and capture

MIDI input will continue to be captured and timed correctly after the Pause button is clicked. This makes it possible to play the beginning of a piece of music and stop exactly where an input is expected.

Combining 'wait' instructions

Try:

_script(wait for C3 channel 1) C4 D4 _capture(104) E4 F4 G4 _script(wait for D3 channel 1) _capture(0) A4 B4

The recording takes place during the execution of E4 F4 G4 and during the unlimited waiting time for note D3. This allows events to be recorded even when no events are being played.

The C3 and D3 notes have been used for ease of access on a simple keyboard. The dates in the capture file are not incremented by the wait times.

The following is a setup for recording an unlimited sequence of events while no event is being played. Note C0 will not be heard as it has a velocity of zero. Recording ends when the STOP or PANIC button is clicked.

_capture(65) _vel(0) C0 _script(wait forever) C0

Interpreting the recorded input as a polymetric structure will be made more complex by the fact that no rhythmic reference has been provided.

Microtonal corrections

In the following example, both input and output receive microtonal corrections of the just intonation scale.

_scale(just intonation,0) C4 D4 _capture(104) E4 F4 G4 A4 _capture(0) B4

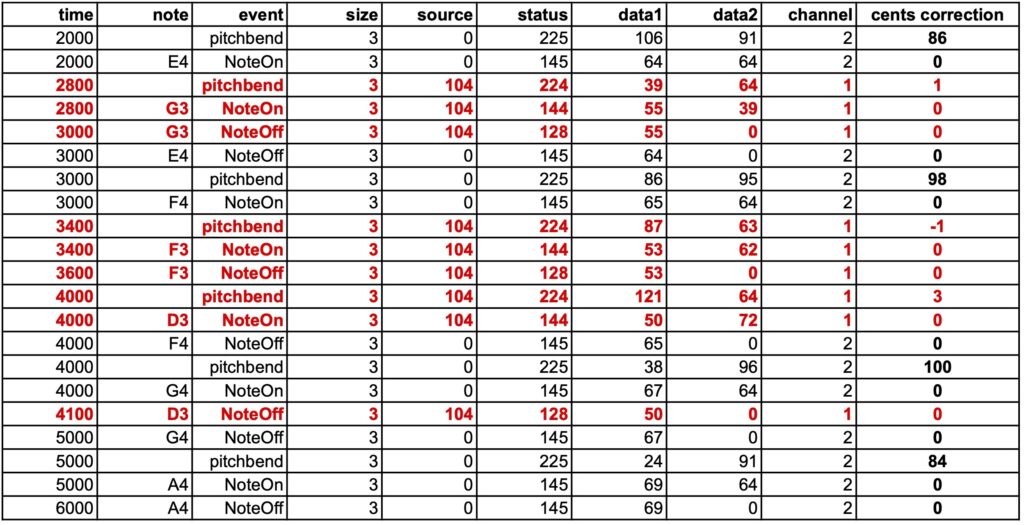

Below is a capture file obtained by entering G3 F3 D3 over the sequence E4 F4 G4 A4. Note the pitchbend corrections in the last column, which indicate microtonal adjustments. The relevant values are those that precede NoteOns on the same channel.

The output events (source 0) are played on MIDI channel 2, and the input events (source 104) on MIDI channel 1. More channels will be used if output notes have an overlap — see the page MIDI microtonality. In this way, pitchbend commands and the notes they address are distributed across different channels.

Added pitchbend

In the following example, a pitchbend correction of +100 cents is applied to the entire piece. It does modify output events, but it has no effect on input events.

_pitchrange(200) _pitchbend(+100) _scale(just intonation,0) C4 D4 _capture(104) E4 F4 G4 A4 _capture(0) B4

Again, after playing G3 F3 D3 over the sequence E4 F4 G4 A4:

Pitchbend corrections applied to the input (source 104) are only those induced by the microtonal scale. Pitchbend corrections applied to the output (source 0) are the combination of microtonal adjustments (see previous example) and the +100 cents of the pitchbend command.
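The relation between a correction in cents and a 14-bit MIDI pitchbend value can be sketched as follows. This assumes that _pitchrange(200) means a full-scale deviation of ±200 cents and that the neutral position is 8192, as in standard MIDI. The function names are hypothetical, and the Bol Processor's own rounding may differ.

```python
def cents_to_pitchbend(cents: float, range_cents: float = 200.0) -> int:
    """Convert a correction in cents to a 14-bit pitchbend value (0..16383)."""
    center = 8192  # neutral pitchbend position in standard MIDI
    value = center + round(cents / range_cents * 8192)
    return max(0, min(16383, value))  # clamp to the 14-bit range


def pitchbend_bytes(value: int) -> tuple:
    """Split a 14-bit pitchbend value into its two 7-bit data bytes (LSB, MSB)."""
    return value & 0x7F, (value >> 7) & 0x7F


print(cents_to_pitchbend(0))      # 8192
print(cents_to_pitchbend(100))    # 12288
print(pitchbend_bytes(12288))     # (0, 96)
```

For an output note, the microtonal correction and the +100 cents would be summed (in cents) before conversion. For an input note, only the microtonal correction applies.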

Capturing and recording more events

The _capture() command allows you to capture most types of MIDI events: all 3-byte types, and the 2-byte type Channel pressure (also called Aftertouch).

Below is a (completely unmusical) example of capturing different messages.

The capture will take place in a project called "-da.tryReceive":

_script(wait for C0 channel 1) _capture(111) D4 _pitchrange(200) _pitchbend(+50) _press(35) _mod(42) D4 _script(wait forever)

The recording will have a "111" marker to indicate which events have been received. Only two D4 notes are played during the recording. The second one is raised by 50 cents and has a channel pressure of 35 and a modulation of 42.

After playing back the two D4s, the machine will wait until the STOP button is clicked. This gives the other machine time to send its own data and have it recorded.

The second machine is another instance of BP3, actually another tab in the interface's browser, running the "-da.trySend" project:

{_vel(0) <<C0>>} G3 _press(69) _mod(5430) D3 _pitchrange(200) _pitchbend(+100) E3

This project starts by sending "{_vel(0) <<C0>>} ", which is the note C0 with velocity 0 and null duration (an out-time object). This triggers "-da.tryReceive", which was waiting for C0. The curly brackets {} restrict velocity 0 to the note C0. Outside this expression, velocities are set to their default value (64). In this data, channel pressure, modulation and a pitchbend correction of +100 cents are applied to the final note E3.

The resulting sound is terrible. You've been warned:

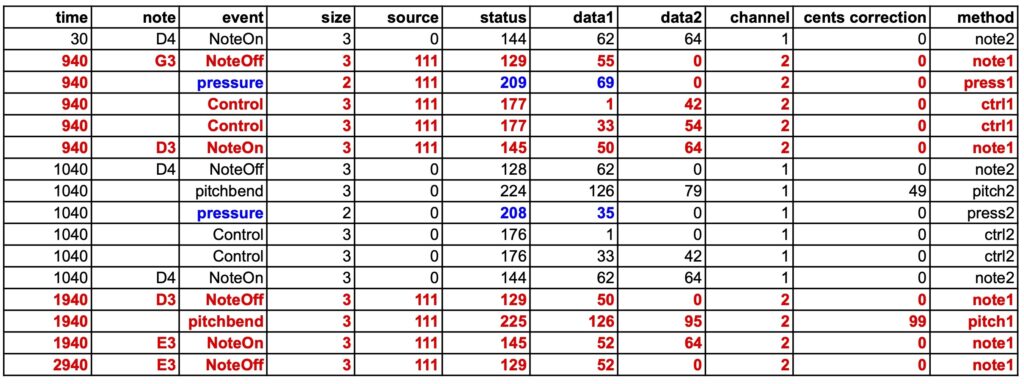

However, the 'capture' file shows that all events have been correctly recorded:

Captured events (from "-da.trySend") are coloured red. They have been automatically assigned to MIDI channel 2, so that corrections will not be mixed between performance and reception.

The pitchbend corrections are shown in the "cents correction" column, each applied to its own channel.

The channel pressure corrections (coloured blue) display the expected values. The modulation corrections (in the range 0 to 16383) are divided into two 3-byte messages, the first carrying the MSB and the second the LSB.
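The MSB/LSB split of a 14-bit modulation value can be illustrated with a short Python sketch. It assumes the standard MIDI controller numbers for the modulation wheel (1 for the MSB, 33 for the LSB). The function names are hypothetical, and the exact bytes sent by the Bol Processor should be checked against the 'capture' file.

```python
def split_14bit(value: int) -> tuple:
    """Split a 14-bit value (0..16383) into (MSB, LSB), 7 bits each."""
    if not 0 <= value <= 16383:
        raise ValueError("value must be in the range 0..16383")
    return value >> 7, value & 0x7F


def modulation_messages(value: int, channel: int = 1) -> list:
    """Build the two 3-byte ControlChange messages carrying a modulation value.

    Assumption: controller 1 carries the MSB and controller 33 the LSB.
    """
    msb, lsb = split_14bit(value)
    status = 0xB0 | (channel - 1)  # ControlChange status byte for the channel
    return [(status, 1, msb), (status, 33, lsb)]


print(split_14bit(5430))              # (42, 54)
print(modulation_messages(5430, 2))   # [(177, 1, 42), (177, 33, 54)]
```

The value 5430 used in "-da.trySend" therefore splits into an MSB of 42 and an LSB of 54, since 42 × 128 + 54 = 5430.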

There is a time mismatch of approximately 150 milliseconds between the expected and actual dates, but the durations of the notes are accurate. The mismatch is caused by the delay in the transmission of events over the virtual port. The data looks better if the quantization in the "-da.tryReceive" project is set to 100 ms instead of 10 ms. However, this is of minor importance, as a "normalisation" will take place during the (forthcoming) analysis of the "capture" file.

For geeks: The last column indicates where events have been recorded in the procedure sendMIDIEvent(), file MIDIdriver.c.

Capture events without the need to perform

The setup of the "-da.tryReceive" project could be, for example:

_capture(99) _vel(0) C4 _script(wait forever)

Note C4 is not heard because its velocity is 0. It is followed by all MIDI events received by the input until the STOP button is clicked. This makes it possible to record an entire performance. The procedure can also be checked with items produced and performed by the Bol Processor.

Note that the inclusion of pitchbend messages makes it possible to record music played on microtonal scales and (hopefully) identify the closest tunings suitable for reproduction of the piece of music.

For example, try to capture the following phrase from Oscar Peterson's Watch What Happens:

_tempo(2) {4, {{2, F5 Bb4 C5 C4} {3/2, D4} {1/2, Eb4 Db4 D4}, {F4, C5} {1/2, F4, G4} {1/2, F3, G3} {2, Gb3}}}, {4, {C4 {1, Bb3 Bb2} {2, A2}, {D3, A3} {1, Eb3 Eb2} {2, D2}}}

A dialogue box appears to analyse or download the captured data:

The result is complex, but it's going to lend itself to being analysed automatically.

The raw table is available to download (as an image) here. The current analysis of this example is available here.

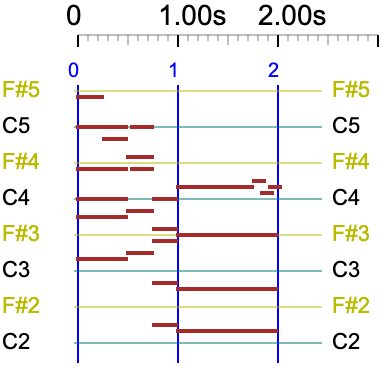

Once the origin of dates has been set to the first NoteOn or NoteOff received, the result is as follows. Only the important columns are displayed: time, note, event, key, velocity.

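| Time (ms) | Note | Event | Key | Velocity |
|---|---|---|---|---|
| 0 | F5 | NoteOn | 77 | 64 |
| 0 | F4 | NoteOn | 65 | 64 |
| 0 | C5 | NoteOn | 72 | 64 |
| 0 | C4 | NoteOn | 60 | 64 |
| 0 | D3 | NoteOn | 50 | 64 |
| 0 | A3 | NoteOn | 57 | 64 |
| 230 | F5 | NoteOff | 77 | 0 |
| 230 | Bb4 | NoteOn | 70 | 64 |
| 470 | Bb4 | NoteOff | 70 | 0 |
| 470 | C5 | NoteOff | 72 | 0 |
| 470 | C5 | NoteOn | 72 | 64 |
| 470 | F4 | NoteOff | 65 | 0 |
| 470 | C5 | NoteOff | 72 | 0 |
| 470 | F4 | NoteOn | 65 | 64 |
| 470 | G4 | NoteOn | 67 | 64 |
| 470 | C4 | NoteOff | 60 | 0 |
| 470 | Bb3 | NoteOn | 58 | 64 |
| 470 | D3 | NoteOff | 50 | 0 |
| 470 | A3 | NoteOff | 57 | 0 |
| 470 | Eb3 | NoteOn | 51 | 64 |
| 710 | C5 | NoteOff | 72 | 0 |
| 710 | C4 | NoteOn | 60 | 64 |
| 710 | F4 | NoteOff | 65 | 0 |
| 710 | G4 | NoteOff | 67 | 0 |
| 710 | F3 | NoteOn | 53 | 64 |
| 710 | G3 | NoteOn | 55 | 64 |
| 710 | Bb3 | NoteOff | 58 | 0 |
| 710 | Bb2 | NoteOn | 46 | 64 |
| 710 | Eb3 | NoteOff | 51 | 0 |
| 710 | Eb2 | NoteOn | 39 | 64 |
| 960 | C4 | NoteOff | 60 | 0 |
| 960 | D4 | NoteOn | 62 | 64 |
| 960 | F3 | NoteOff | 53 | 0 |
| 960 | G3 | NoteOff | 55 | 0 |
| 960 | F#3 | NoteOn | 54 | 64 |
| 960 | Bb2 | NoteOff | 46 | 0 |
| 960 | A2 | NoteOn | 45 | 64 |
| 960 | Eb2 | NoteOff | 39 | 0 |
| 960 | D2 | NoteOn | 38 | 64 |
| 1730 | D4 | NoteOff | 62 | 0 |
| 1730 | Eb4 | NoteOn | 63 | 64 |
| 1830 | Eb4 | NoteOff | 63 | 0 |
| 1830 | C#4 | NoteOn | 61 | 64 |
| 1880 | C#4 | NoteOff | 61 | 0 |
| 1880 | D4 | NoteOn | 62 | 64 |
| 1960 | D4 | NoteOff | 62 | 0 |
| 1960 | F#3 | NoteOff | 54 | 0 |
| 1960 | A2 | NoteOff | 45 | 0 |
| 1960 | D2 | NoteOff | 38 | 0 |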
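A natural first step when analysing such a list is to pair each NoteOn with the next NoteOff on the same key, which yields notes with an onset and a duration. The Python sketch below does this for a few rows taken from the list above. The function `pair_notes` is hypothetical and is not the analysis performed by the Bol Processor. It simply ignores a NoteOff that has no pending NoteOn.

```python
from collections import defaultdict


def pair_notes(events):
    """Pair NoteOn/NoteOff events into (note, onset_ms, duration_ms) tuples.

    events: iterable of (time_ms, note, event, key, velocity), sorted by time.
    """
    pending = defaultdict(list)  # key number -> onsets still waiting for a NoteOff
    notes = []
    for time, name, kind, key, velocity in events:
        if kind == "NoteOn" and velocity > 0:
            pending[key].append(time)
        elif pending[key]:
            # NoteOff (or NoteOn with velocity 0) closes the oldest pending onset.
            onset = pending[key].pop(0)
            notes.append((name, onset, time - onset))
    return sorted(notes, key=lambda n: n[1])


# A few rows from the list above (upper voice only).
rows = [
    (0, "F5", "NoteOn", 77, 64),
    (230, "F5", "NoteOff", 77, 0),
    (230, "Bb4", "NoteOn", 70, 64),
    (470, "Bb4", "NoteOff", 70, 0),
    (960, "D4", "NoteOn", 62, 64),
    (1730, "D4", "NoteOff", 62, 0),
    (1730, "Eb4", "NoteOn", 63, 64),
    (1830, "Eb4", "NoteOff", 63, 0),
]

for note in pair_notes(rows):
    print(note)
```

The resulting durations (230, 240, 770 and 100 ms for these rows) are the raw material from which the ratios of a polymetric expression would have to be estimated.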

The next task on our agenda will be to analyse the 'capture' file and reconstruct the original polymetric expression (shown above) or an equivalent version. Then we can consider moving on to grammars, similar to what we've done with imported MusicXML scores (read page).

Constructing a polymetric expression from an arbitrary stream of notes will hopefully be achieved with the help of graph neural networks. The idea is to train a model with a set of sound files, MIDI files, and their 'translations' as polymetric expressions. Read the AI recognition of polymetric notation page to follow this approach.